Ever wonder if there was a way to quantify if the green button on your website homepage really does convert better than the red button? Are you curious about testing a second tagline idea to see if it resonates better with your online visitors? Or maybe you’re looking for a way to quantify marketing and improve your ROI?

There is a way to do all this and more: A/B testing.

Many marketers rely on gut feeling to decide what works best, but digital tracking tools let you remove the guesswork and measure which elements of your website actually perform. Let’s look closer at how A/B testing works and how it can help you test assumptions and improve digital marketing results.

What is A/B testing?

A/B testing, at its core, is simply testing one website or content element against another and measuring the results to see which one performed better. To do this, you need two things:

- An element to test

- A conversion based on that element.

The A test is the first version (usually your existing page, which acts as the control), and the B test is the second version. You can repeat the process as you introduce new variations.

An A/B Test Example

Let’s consider a button for our sample test. Your site has a form on your page with a red button. The button is our element, and completing the form is our conversion. You are getting decent conversions, but there is always room for improvement. With A/B testing, you can test a second button color and, based on the results, see if a different color results in a better conversion rate.

To set this up, you would create a second version of the element (in this case, a green button) and, using a plugin or other A/B testing tool, serve both versions of the page to visitors, split at 50% each. So, half the visitors will see the form with our existing red button (A test), and half will see the new green button (B test).
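One common way to produce a 50/50 split is to hash a stable visitor identifier, so a returning visitor always sees the same version. The Python sketch below is a minimal illustration under that assumption, not the method of any particular plugin. The function name `assign_variant`, the experiment label, and the visitor IDs are all hypothetical.

```python
import hashlib
from collections import Counter


def assign_variant(visitor_id: str, experiment: str = "form-button-color") -> str:
    """Deterministically place a visitor in version A or B (roughly 50/50)."""
    # Hash the experiment name together with the visitor ID so that each
    # experiment gets its own independent split.
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # a number from 0 to 99
    return "A" if bucket < 50 else "B"  # A = red button, B = green button


# Simulate 10,000 hypothetical visitors and count how many land in each version.
counts = Counter(assign_variant(f"visitor-{i}") for i in range(10_000))
print(counts)
```

Because the assignment depends only on the visitor ID and the experiment name, no lookup table is needed to keep each visitor's experience consistent.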

How long do I run the test?

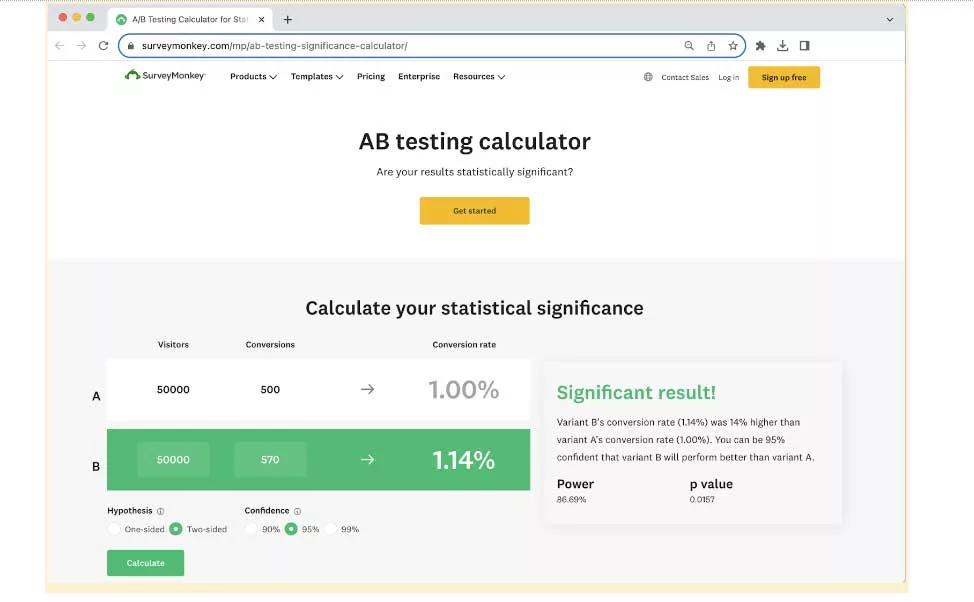

An A/B test needs to run long enough to generate statistically significant results. What are statistically significant results? They are results based on enough visitors that the difference between the two versions is unlikely to be due to chance. Having only ten visits to your website for a test will not offer meaningful results. Having 4000 visitors to your site usually will. (Note: the exact number varies with your current conversion rate and the size of the difference you want to detect, so checking your own numbers is important.) So, the key is to let the test run long enough and get enough visitors to generate a valid result. An online A/B test significance calculator can confirm whether you have hit the mark.
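To get a feel for how many visitors "enough" is, the sketch below uses the standard sample-size approximation for comparing two conversion rates. It is a rough estimate, not a replacement for a dedicated calculator. The 5% significance level, the 80% power, and the example conversion rates are assumptions chosen for illustration, and `visitors_per_variant` is a hypothetical helper name.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variant(baseline: float, target: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per version to detect baseline -> target."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    pooled = (baseline + target) / 2
    numerator = (
        z_alpha * sqrt(2 * pooled * (1 - pooled))
        + z_beta * sqrt(baseline * (1 - baseline) + target * (1 - target))
    ) ** 2
    return ceil(numerator / (target - baseline) ** 2)


# Assumed example: a 3% conversion rate today, hoping to reach 4%.
print(visitors_per_variant(0.03, 0.04))
# A larger hoped-for improvement needs far fewer visitors.
print(visitors_per_variant(0.03, 0.07))
```

Under these assumptions, detecting a one-point improvement takes several thousand visitors per version, while a larger improvement can be confirmed with far fewer. That is why the required number varies from test to test.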

Crunching the Numbers

So, we have run our hypothetical test, and the numbers are in. The green button won. While the original page showed a conversion rate of 3%, that number rose to 7% for users seeing the green button. Since the button is the only change on the page, and provided the test ran long enough to be statistically significant, we can safely attribute the result to the button color.
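A significance calculator typically runs a check along the lines of the two-proportion z-test sketched below. The visitor counts are assumptions made up for illustration (2,000 visitors per version, which gives 60 and 140 conversions at 3% and 7%), and `two_proportion_z_test` is a hypothetical helper name.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return the z statistic and two-sided p-value for B versus A."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate: the conversion rate if both versions performed the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Assumed counts: red button 60 of 2,000 (3%), green button 140 of 2,000 (7%).
z, p_value = two_proportion_z_test(60, 2000, 140, 2000)
print(f"z = {z:.2f}, p = {p_value:.2g}")
```

With these assumed counts, the p-value falls far below the conventional 0.05 threshold, so the difference is very unlikely to be due to chance. With only a handful of visitors, the same 3% versus 7% gap would not pass the test.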

Keep the Data and Test Results Accurate

And therein lies the key to successful A/B testing. You want to test one element. The more elements you test, the more unknowns you introduce. If you are testing four elements with two versions each, you now have to serve up 16 different pages to account for every combination (the formula is 2^x, where x is the number of elements). This can quickly become a nightmare to manage, as you now have to track 16 pages and decipher which combination worked. By testing one element at a time, you can eliminate any other factors and narrow the result down to the one item you are testing.
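To see how quickly the number of pages grows when several elements are tested at once, the sketch below lists every combination, assuming each element has exactly two versions. The element names and their versions are hypothetical.

```python
from itertools import product

# Hypothetical elements under test, each with two versions.
elements = {
    "button_color": ["red", "green"],
    "tagline": ["current", "new"],
    "image": ["photo_1", "photo_2"],
    "form_position": ["top", "bottom"],
}


def page_combinations(elements: dict) -> list:
    """Return one dict per distinct page (every mix of element versions)."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]


pages = page_combinations(elements)
print(len(pages))  # 2 * 2 * 2 * 2 = 16 distinct pages
print(pages[0])
```

Each added two-version element doubles the number of pages, and every page needs its own share of visitors to reach a valid result.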

What can I test? More than just buttons!

- Taglines

- Content blocks

- Placement of a form on a page

- Two different images

- Different CTA verbiage

- Different form fields

- And much more

What if A/B testing is inconclusive?

It’s worth noting that not all A/B testing yields black-and-white answers; sometimes, data is inconclusive. When faced with this challenge, approach the results with a strategic mindset:

- Review your testing parameters to ensure they were accurately implemented.

- Consider extending the testing duration to gather more data.

- Explore qualitative feedback, such as user behavior, for nuanced insights.

- Brainstorm alternative hypotheses and, if necessary, change your variables and conduct more testing.

In the absence of a clear winner, these steps will deepen your understanding of the results so you can make informed decisions when fine-tuning your digital strategies.

Trust the Data

With most A/B testing, you take the guesswork and hunches out of the equation and instead rely on black-and-white data. The results may show that long-held beliefs about colors, CTA verbiage, etc., do not always hold true on your site. In the end, the numbers don’t lie.

Ready to put your assumptions to the test? Unlock the full potential of your digital presence and find clarity with a one-on-one website consultation with our design and development team at Intuitive Websites. Don’t guess, test! Contact us today.